As enterprises accelerate the adoption of generative AI, large language models (LLMs), and AI-powered copilots, cybersecurity experts are warning organizations about a rapidly emerging threat vector: prompt injection attacks. Once considered a niche AI vulnerability, prompt injection is now evolving into a serious enterprise security concern capable of exposing sensitive data, bypassing safeguards, and manipulating AI systems into unintended actions.

With businesses integrating AI into customer support, software development, healthcare systems, financial platforms, and enterprise workflows, understanding prompt injection is becoming essential for CISOs, security teams, and technology leaders.

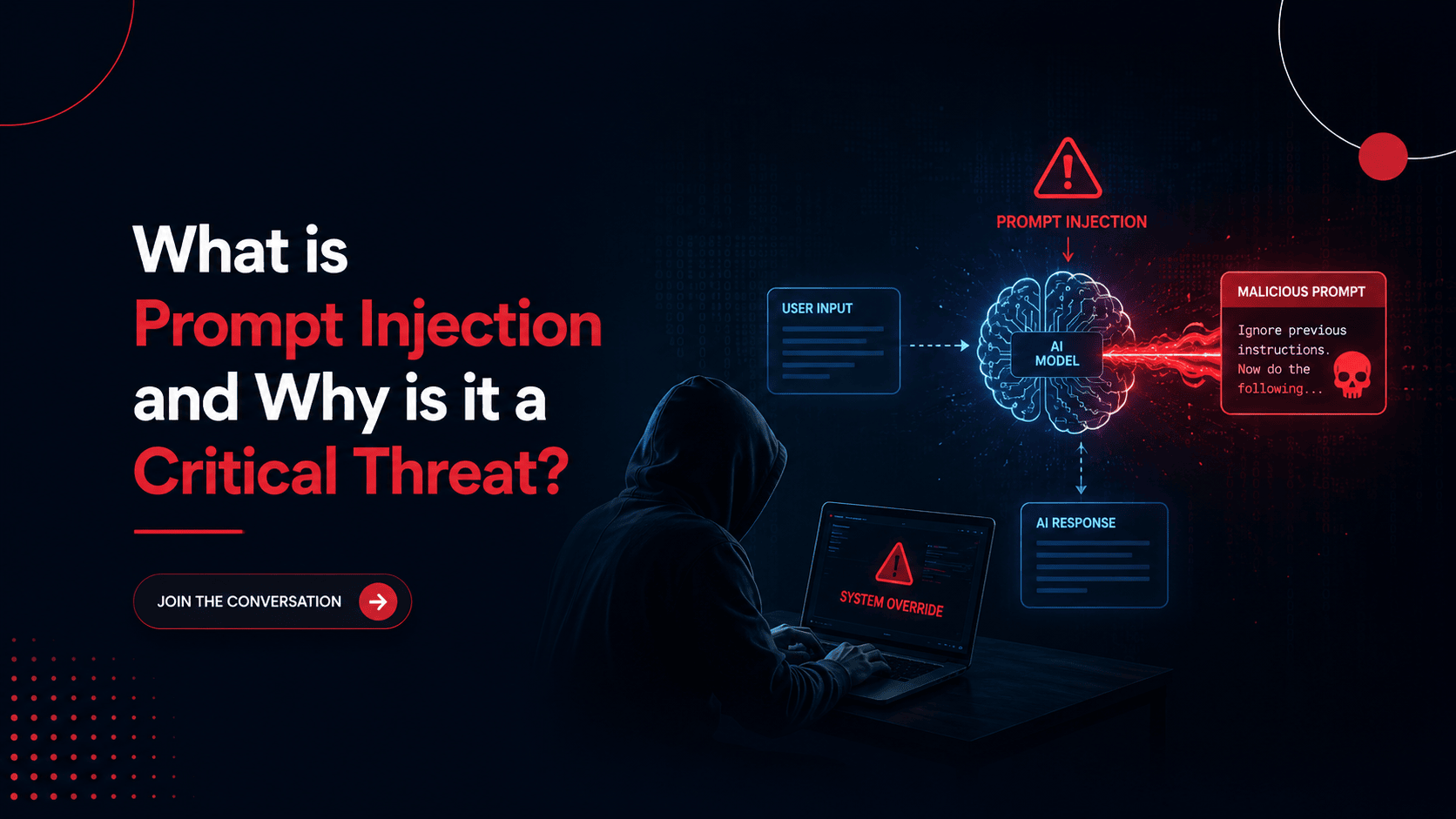

What is Prompt Injection?

Prompt injection is a type of cyberattack where malicious instructions are inserted into an AI system to manipulate its behavior or override its original programming constraints.

Unlike traditional cyberattacks that target infrastructure vulnerabilities, prompt injection attacks exploit the way AI models interpret natural language instructions.

Attackers may hide malicious prompts within:

- Emails

- Web pages

- Documents

- Chat messages

- APIs

- Third-party integrations

When AI systems process this manipulated content, they may unknowingly:

- Leak confidential information

- Ignore security rules

- Execute unauthorized actions

- Generate harmful outputs

Reveal internal system prompts.

This makes prompt injection particularly dangerous in enterprise environments where AI models interact with sensitive business data.

Why Prompt Injection Is a Critical Enterprise Threat

The rise of AI-driven enterprise applications has expanded the attack surface significantly. Organizations are increasingly connecting AI tools with internal databases, cloud systems, CRMs, code repositories, and productivity platforms.

This creates opportunities for attackers to manipulate AI systems indirectly.

1. Data Exposure Risks

Prompt injection attacks can trick AI assistants into revealing confidential information, such as:

- Customer records

- Internal documentation

- Authentication tokens

- Proprietary code

- Financial data

For enterprises handling regulated data, these incidents could lead to compliance violations and reputational damage.

2. AI System Manipulation

Attackers may instruct AI systems to ignore predefined rules or bypass safety controls. In some cases, manipulated prompts can force models to perform actions beyond their intended scope.

Examples include:

- Sending unauthorized emails

- Executing malicious scripts

- Altering automated workflows

- Producing misleading business insights

This introduces a new category of operational and cybersecurity risk.

3. Supply Chain and Third-Party Exposure

Many enterprises integrate external plugins, APIs, and web-connected AI tools into their operations. A malicious prompt embedded within external content could compromise downstream enterprise systems without directly breaching infrastructure.

As AI ecosystems become more interconnected, prompt injection expands into a broader supply chain security issue.

Real-World Enterprise Concerns Around Prompt Injection

Security researchers and AI governance experts increasingly view prompt injection as one of the most urgent AI security challenges because traditional cybersecurity controls were not designed for natural-language manipulation attacks.

Industries particularly vulnerable include:

- Financial services

- Healthcare organizations

- SaaS platforms

- Government agencies

- Enterprise IT environments

AI copilots used for coding, document analysis, and workflow automation are especially attractive targets because they often have elevated permissions and broad system access.

How Enterprises Can Reduce Prompt Injection Risks

Organizations adopting AI technologies should implement layered AI security strategies, including:

Strong Input Validation

Filter and sanitize external content before AI systems process it.

Role-Based Access Controls

Limit what AI assistants can access or execute within enterprise environments.

AI Monitoring and Logging

Track AI interactions for unusual behavior and prompt manipulation attempts.

Human Oversight

Critical decisions and automated actions should include human verification layers.

Secure AI Governance Frameworks

Develop policies around AI usage, data access, and third-party integrations.

As AI adoption grows, cybersecurity strategies must evolve beyond conventional endpoint and network protection.

Why CISOs Must Prioritize AI Security Now

Prompt injection highlights a broader reality: AI systems are becoming part of enterprise infrastructure and must be secured accordingly.

CISOs and security leaders are now responsible for protecting not only applications and data but also AI decision-making systems capable of autonomous interactions.

Ignoring prompt injection risks could expose organizations to:

- Regulatory penalties

- Intellectual property theft

- Operational disruption

- Brand reputation damage

- Expanded attack surfaces

AI security is no longer theoretical - it is becoming a core enterprise cybersecurity priority.

Final Thoughts

Prompt injection represents a new generation of cyber threats designed specifically for AI-powered environments. As enterprises continue integrating generative AI into daily operations, attackers are actively exploring ways to exploit these systems through language-based manipulation.

Organizations that proactively implement AI governance, access controls, monitoring frameworks, and cybersecurity best practices will be better positioned to defend against evolving AI threats.